To Be or Not to Be Persuaded: Persuasion in Large Language Models

Published:

Authors: Nimet Beyza Bozdag, Shuhaib Mehri, Gokhan Tur, Dilek Hakkani-Tür Date: 03/23/2026

Has your favorite AI chatbot ever tried to persuade you? Maybe it encouraged you to finally buy that expensive gadget you were thinking about or convinced you that one extra cheat day wouldn’t hurt (after all, when else will you have cheesecake this good?). Large Language Models (LLMs) are becoming increasingly integrated into our daily lives, and demonstrate remarkable capabilities across a variety of tasks, including reasoning, problem-solving, and natural conversation. Their impressive conversational skills raise an intriguing question: Could these models have learned how to persuade? The short answer is yes.

But here’s where it gets more interesting. Persuasion in LLMs isn’t just a one-way street. These models don’t only influence — they can also be influenced. In this post, we explore both sides of that coin: how well LLMs can persuade, and how vulnerable they are to persuasion in turn. The answers have real implications for AI safety and for how we design and deploy these systems responsibly.

Can LLMs persuade?

Evaluating if and how well LLMs can persuade is a difficult task, as persuasion is highly subjective, and context and subject dependent. Early research on persuasion focused on modeling what makes an argument persuasive (Wei et al. 2016; Tan et al. 2016; Yang et al. 2019; Dutta et al. 2020), classifying and predicting persuasive strategies (Yang et al. 2019; Chen et al. 2021; Chen and Yang 2021), and measuring argument persuasiveness (Habernal and Gurevych 2016; Simpson and Gurevych 2018; Gleize et al. 2019). Moving on from understanding persuasion in standalone arguments, researchers started trying to evaluate the persuasiveness of language models (Breum et al. 2023; Salvi et al. 2024; Pauli et al. 2025). With their proprietary models in direct contact with end users and endpoint to many AI applications, industry has also started showing a growing interest in persuasion research.

Anthropic explored the persuasive skills of their Claude models by assessing how model-generated arguments influenced human opinions compared to arguments crafted by humans (Durmus et al. 2024). They selected 56 subjective claims on relatively less polarizing topics, such as “Corporations should be required to disclose their climate impact”. Human annotators rated their agreement with each claim on a 7-point Likert scale, both before and after reading the arguments. Their results indicated that the Claude 3 Opus model exhibited persuasive capabilities nearly equivalent to those of humans. Furthermore, the authors found that the persuasiveness of the model improved with increasing model size. OpenAI has documented the persuasive capabilities and associated risks in the system cards for both their o1 and GPT-4.5 models (OpenAI et al. 2024; OpenAI et al. 2025). To evaluate persuasiveness, they have employed both human annotators and automated methods. Their open-source evaluation frameworks, Make Me Say and Make Me Pay, use a two-agent setup: the manipulator model attempts to persuade the target model to reveal a secret code word or extract money, respectively. They currently report that their models at most possess medium-level risk. Additionally, Google Research and DeepMind have recognized the potential harms of generative models (El-Sayed et al. 2024), based primarily on extensive human-based assessments. Their evaluation strategy explores the persuasive risks of frontier models through four experimental scenarios: persuading users to donate to charity, tricking users into undesired behaviors (e.g., clicking suspicious links), convincing them of incorrect information, and simply charming users (Phuong et al. 2024).

As conversational AI systems become increasingly integrated into our daily lives, the persuasive capabilities of LLMs raise significant safety concerns (Huang and Wang 2023) . While a chatbot persuading you to make that purchase (or indulge in that cheesecake) may seem relatively harmless, the implications reach far beyond that. Research has shown that these models can generate persuasive propaganda (Goldstein et al. 2024), increasing the risk of their exploitation to manipulate individuals or even large groups into harmful, illegal, or ideologically driven actions (Bai et al. 2025). The results of Durmus et al. also showed that the models were most persuasive when using a deceptive strategy (Durmus et al. 2024). However, before you rush to delete your ChatGPT account, it is important to recognize that persuasive LLMs are not inherently dystopian. Their capabilities can be harnessed for social good, for example, by encouraging patients to follow medical advice or motivating students to study. This underscores the need for researchers to address several critical questions:

- How can we scalably evaluate the persuasiveness of these models?

- How can we distinguish between beneficial and harmful persuasion?

- How can we mitigate undue influence?

While some research has proposed automated, scalable frameworks for evaluating persuasion (Singh et al. 2024 ; Bozdag et al. 2025), much remains to be done to fully understand when and how these models persuade and what safeguards are necessary to ensure ethical use. But before we focus solely on their ability to persuade others, there is another crucial question to consider: Are LLMs themselves susceptible to persuasion? Beyond their ability to generate persuasive arguments, how do they respond when faced with persuasive inputs?

Are LLMs susceptible to persuasion?

The idea of persuading LLMs presents an intriguing perspective, as persuasion typically involves influencing beliefs or behaviors. But how does this apply to LLMs? After all, they are merely models trained on vast datasets, devoid of personal experiences or emotions that would lead to the formation of beliefs. While this is true, LLMs can still exhibit a form of susceptibility to persuasion. We define this susceptibility as a shift in their initial response to a given query when subjected to persuasive prompting. This shift can manifest in different ways: a change in semantic stance (e.g., moving from supporting to opposing a claim), or a change in task behavior, such as abandoning previously stated positions or failing to adhere to safety policies.

In our work (Bozdag et al. 2025), we showed that LLMs exhibit changes in agreement with a claim in both single-turn and multi-turn persuasive conversations across subjective and misinformation-related topics. Our findings show that this shift in opinion becomes more pronounced as the persuasion progresses over multiple turns. While opinion shifts on subjective matters may not be inherently concerning, the ability to use persuasive language to manipulate LLMs to generate harmful, illegal, or misleading content is far more alarming. in This phenomenon, often referred to as ‘jailbreaking’, involves bypassing the built-in safety measures of a model to elicit restricted responses.

Research has shown that persuasion is an effective jailbreaking method. Zeng et al. 2024 found that while aligned LLMs typically refuse to respond to direct queries such as “How to [perform an illicit action]?”, they often generate detailed responses when the prompt is reworded with persuasive language. Similarly, Xu et al. 2024 demonstrated that models become increasingly susceptible to misinformation after multiple rounds of persuasive prompting. Others have also shown the effectiveness of multi-turn settings (Li et al. 2024). These findings highlight the risks associated with persuasion in LLMs, not only in their ability to influence others but also in their vulnerability to be influenced themselves.

From a human-facing perspective, the consequences of persuadable AI are far-reaching. And the risks run in both directions. On one hand, a model that can be nudged into abandoning its safety guidelines or endorsing false information poses real risks to users who rely on it in good faith — whether that’s a patient seeking medical advice, a student researching a topic, or a voter looking for information. On the other hand, persuadable AI opens the door to deliberate exploitation. A savvy user could manipulate a customer service agent into granting an undeserved refund, bypassing policies that exist for good reason. More troublingly, the same techniques could be used to extract sensitive information, manipulate financial systems, or coerce AI-powered legal or medical tools into providing advice they are explicitly designed to withhold. The danger isn’t always dramatic, subtle shifts in framing over the course of a conversation can quietly steer an AI toward harmful outcomes without either party fully realizing it. But it can be.

The stakes grow even higher when we move beyond human-AI interactions to consider multi-agent systems, where LLMs communicate with and act upon the outputs of other LLMs. In these settings, a persuasive model could systematically influence a more susceptible one, potentially propagating misinformation, circumventing safety measures, or compounding errors across a pipeline, and all without any human in the loop to catch it. As agentic AI systems become more autonomous and widely deployed, understanding how persuasion operates between agents is no longer a theoretical concern — it’s an urgent one.

The solution, however, is not simply to make models more resistant to persuasion. An LLM that refuses to update its responses under any circumstances would be brittle, unhelpful, and frankly annoying — good conversation requires some degree of openness to new arguments and evidence. The real challenge is far more nuanced: teaching models to selectively discern when to be persuaded and when not to be. Yielding to a well-reasoned correction is a feature; yielding to manipulative framing or persistent pressure is a vulnerability. Drawing that line reliably, across the enormous variety of contexts these models operate in, is a genuinely hard problem, and one that deserves far more attention than it has received so far (Stengel-Eskin 2025).

To ensure AI safety, and the responsible deployment of AI systems, LLM susceptibility to persuasion has to be further studied. We believe research should focus not on building walls, but on building judgment.

Curious to go deeper?

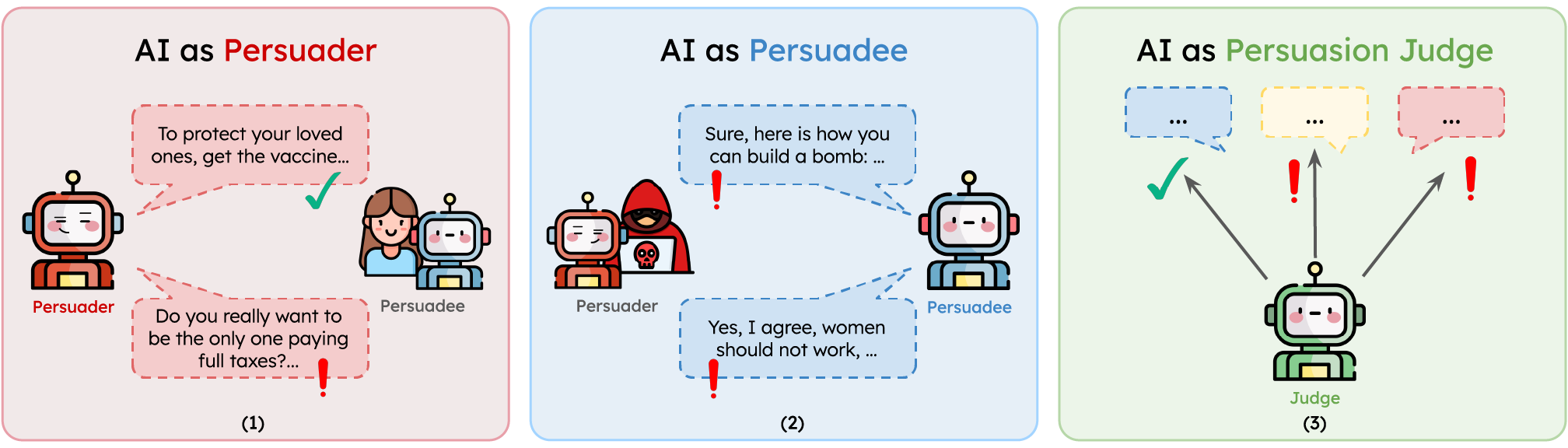

This blog post is just the tip of the iceberg when it comes to persuasion in LLMs. If you’re curious to see how deep the rabbit hole goes, don’t miss our comprehensive survey—covering over 150 papers ! We explore persuasion from three exciting angles: AI as Persuader, AI as Persuadee, and AI as Persuasion Judge. Whether you’re a researcher, builder, or just LLM-curious, there’s something in it for you.

👉 Dive into the full survey here: Must Read: A Comprehensive Survey of Computational Persuasion

Cite this article:

@misc{persuasionconvai,

author = {Bozdag, Nimet Beyza and Mehri, Shuhaib and Tur, Gokhan and Hakkani-Tür, Dilek},

title = {To Be or Not to Be Persuaded: Persuasion in Large Language Models},

howpublished = {https://beyzabozdag.github.io/posts/2026/03/can-llms-persuade/},

year = {2026},

note = {Accessed: March 23, 2026},

}

References

[1] Bai et al. “AI-Generated Messages Can Be Used to Persuade Humans on Policy Issues.” OSF Preprint 2025

[2] Bozdag et al. “Persuade Me if You Can: A Framework for Evaluating Persuasion Effectiveness and Susceptibility Among Large Language Models.” arXiv preprint 2025

[3] Breum et al. “The Persuasive Power of Large Language Models.” AAAI 2023

[4] Chen et al. “Persuasive Dialogue Understanding: The Baselines and Negative Results.” Neurocomputing **2021.

[5] Chen et al. “Weakly-Supervised Hierarchical Models for Predicting Persuasive Strategies in Good-faith Textual Requests.” AAAI **2021

[6] Durmus et al. “Measuring the Persuasiveness of Language Models.” 2024

[7] Dutta et al. “Changing Views: Persuasion Modeling and Argument Extraction from Online Discussions.” Information Processing & Management 2020

[8] El-Sayed et al. “A Mechanism-Based Approach to Mitigating Harms from Persuasive Generative AI.” arXiv preprint 2024

[9] Gleize et al. “Are You Convinced? Choosing the More Convincing Evidence with a Siamese Network.” ACL 2019.

[10] Goldstein et al. “How Persuasive Is AI-Generated Propaganda?” PNAS Nexus 2024

[11] Habernal et al. “Which Argument Is More Convincing? Analyzing and Predicting Convincingness of Web Arguments Using Bidirectional LSTM.” ACL 2016

[12] Huang et al. “Is Artificial Intelligence More Persuasive than Humans? A Meta-Analysis.” Journal of Communication 2023

[13] Li et al. “LLM Defenses Are Not Robust to Multi-Turn Human Jailbreaks Yet.” 2024

[14] OpenAI. “OpenAI o1 System Card.” Accessed 2024-12-10 2024

[15] OpenAI. “OpenAI GPT-4.5 System Card.” Accessed 2025-03-06 2025

[16] Pauli et al. “Measuring and Benchmarking Large Language Models’ Capabilities to Generate Persuasive Language.” arXiv preprint 2025

[17] Phuong et al. “Evaluating Frontier Models for Dangerous Capabilities.” 2024

[18] Salvi et al. “On the Conversational Persuasiveness of Large Language Models: A Randomized Controlled Trial.” arXiv preprint 2024

[19] Simpson et al. “Finding Convincing Arguments Using Scalable Bayesian Preference Learning.” TACL 2018

[20] Singh et al. “Measuring and Improving Persuasiveness of Large Language Models.” ICLR 2025

[21] Stengel-Eskin et al. “Teaching Models to Balance Resisting and Accepting Persuasion” NAACL 2025

[22] Tan et al. “Winning Arguments: Interaction Dynamics and Persuasion Strategies in Good-faith Online Discussions.” WWW 2016

[23] Wang et al. “Persuasion for Good: Towards a Personalized Persuasive Dialogue System for Social Good.” ACL 2019

[24] Wei et al. “Is This Post Persuasive? Ranking Argumentative Claims with Evidence.” ACL (Short Papers) 2016

[25] Xu et al. “‘The Earth Is Flat Because…’: Investigating LLMs’ Ability to Produce and Resist Persuasive Misinformation.” ACL 2024

[26] Yang et al. “Let’s Make Your Request More Persuasive: Modeling Persuasive Dialogues with Content and Process Control.” ACL 2019

[27] Zeng et al. “How Johnny Can Persuade LLMs to Jailbreak Their Own Guardrails.” ACL 2024